Why We Build Production-Quality MVPs, Not Prototypes

A common playbook says: ship something scrappy, validate, then invest in “real” engineering. That can work for a narrow, isolated test. But when users bounce because the app is flaky, what you measure is the quality of the experience, not demand for the business. A throwaway prototype still produces data; the risk is that the data is easy to misread.

We build production-quality MVPs from day one. That is not a flex. For us, it is the most reliable way to get metrics you can actually stake a decision on, because product and infrastructure failures stop contaminating market feedback. For a detailed walkthrough of exactly how this process works, see how we validate MVPs in 12 weeks.

The False Economy of Throwaway Prototypes

The logic behind the prototype-first approach goes like this: building a production-quality product takes time and money, so build something cheap first to test the idea, then invest in real engineering once you know it works. In practice, the prototype often gives less separable signal than you hope: users quit because of latency and crashes, not because they evaluated your roadmap.

When prototype-first is still enough: You are testing a single, isolated question (for example, demand on a landing page), and you are deliberately not claiming anything yet about repeat usage or paid conversion in a real product.

Real users behave differently on unreliable software. When an app is slow, crashes unpredictably, or has authentication that breaks every third session, users do not give you honest product feedback. They give up. They stop returning not because the value proposition failed but because the experience was too frustrating to continue with. That churn often measures friction in the interface, not the strength of the underlying offer.

When you run paid acquisition to a prototype and the numbers look bad, you cannot tell whether the numbers are bad because the market does not want the product or because the product was too broken to evaluate. This is the core problem: the thing you are trying to measure and the artifact doing the measuring are confounded. You cannot separate them after the fact.

What Production Quality Actually Means

Production quality is not a vague standard. For us, it means the following, concretely:

- the application is deployed on Azure, not on a local machine or in a development sandbox;

- there is a CI/CD pipeline from day one, so every code change runs through automated checks and deploys through a controlled process;

- the authentication layer is real, not a hardcoded test account;

- the database schema is designed to last, not hacked together in an afternoon;

- error monitoring and alerting are configured, so the team knows when something breaks before a user has to report it.

None of this is gold-plating. Every item on that list addresses a specific failure mode that corrupts validation data. If authentication is flaky, users cannot reliably return to the product after their first session, so your retention data is meaningless. If the database schema was not designed thoughtfully, you cannot run the queries you need to understand user behavior. If there is no error monitoring, you are flying blind during the most data-critical period of your entire company.
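To make the monitoring point concrete, here is a minimal sketch of recording errors per user and per step, so that later drop-offs can be matched against failures. It uses only the Python standard library. The logger name, the `record_errors` decorator, and the `complete_onboarding` function are hypothetical names invented for this illustration, not part of our actual stack, and forwarding the records to an alerting service is not shown.

```python
import functools
import json
import logging

# Hypothetical logger name; a real setup would route this to a monitoring service.
logger = logging.getLogger("app.errors")


def record_errors(step):
    """Log any exception raised in a user-facing step as JSON, then re-raise it."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user_id, *args, **kwargs):
            try:
                return func(user_id, *args, **kwargs)
            except Exception as exc:
                # One structured record per failure: who was affected, and where.
                logger.error(json.dumps({
                    "user_id": user_id,
                    "step": step,
                    "error": type(exc).__name__,
                }))
                raise
        return wrapper
    return decorator


@record_errors(step="onboarding")
def complete_onboarding(user_id, profile):
    # Illustrative step: fails when the profile has no email address.
    if "email" not in profile:
        raise ValueError("profile is missing an email address")
    return {"user_id": user_id, "onboarded": True}
```

Because every failure carries a user ID and a step name, the question "did this user leave after hitting an error?" becomes a lookup rather than a guess.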

The technical foundation shapes the quality of everything measured on top of it. Get the foundation wrong and every metric you collect is suspect.

The Data Integrity Problem

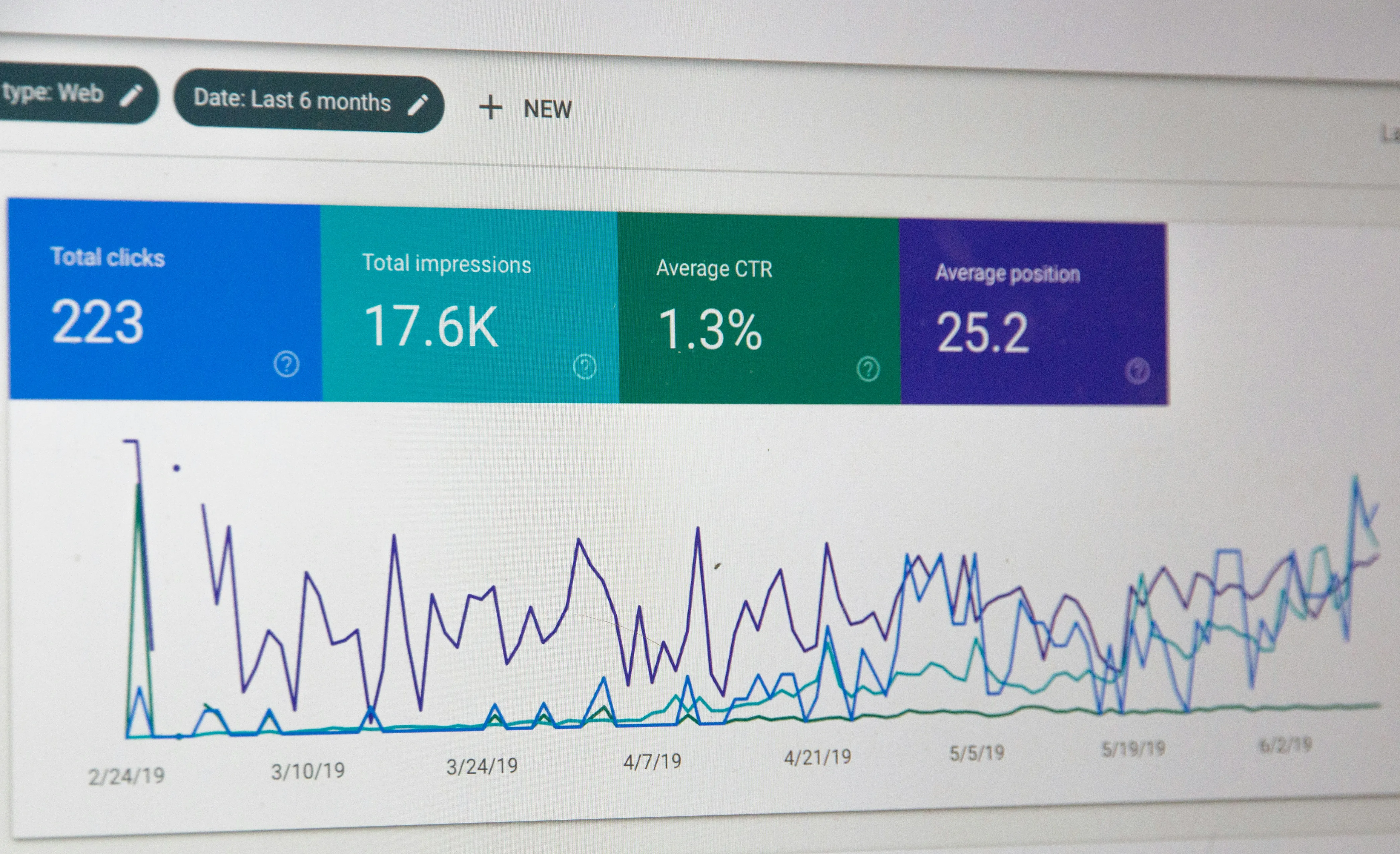

Consider a concrete example. You have built a prototype of a B2B SaaS tool and you run Google Ads campaigns against it. You get 200 trial signups. Of those, 30% use the product once and never come back. Conversion from trial to paid is 2%.

You now have to interpret those numbers. Is 30% one-session churn a sign that the product does not deliver enough value? Or is it a sign that onboarding broke for roughly a third of users, who gave up before they saw the value? Is 2% trial-to-paid conversion a product-market fit problem, a pricing problem, or an onboarding problem? You do not know. And critically, you cannot go back and find out. The moment has passed.

If the product had been reliable from the start (real auth, real error handling, proper monitoring), you would know exactly where users dropped off, whether they encountered errors, and which parts of the product they actually engaged with. That information is the entire point of the validation exercise. Without it, you have spent money to learn almost nothing.
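As a rough sketch of what that separation looks like in practice, the snippet below splits one-session churn by whether a user hit a logged error. The `TrialUser` record, its fields, and the ten sample users are hypothetical and exist only to show the shape of the analysis; they are not real results.

```python
from dataclasses import dataclass


@dataclass
class TrialUser:
    user_id: str
    sessions: int     # number of sessions recorded for this user
    hit_error: bool   # True if monitoring logged an error for this user


def one_session_churn(users):
    """Share of users with exactly one session, or None for an empty group."""
    if not users:
        return None
    return sum(u.sessions == 1 for u in users) / len(users)


def churn_by_error_exposure(users):
    """Compare one-session churn for users who hit errors against those who did not."""
    with_errors = [u for u in users if u.hit_error]
    clean = [u for u in users if not u.hit_error]
    return {
        "hit_error": one_session_churn(with_errors),
        "clean": one_session_churn(clean),
    }


# Hypothetical sample data, for illustration only.
users = [
    TrialUser("u01", sessions=1, hit_error=True),
    TrialUser("u02", sessions=1, hit_error=True),
    TrialUser("u03", sessions=1, hit_error=True),
    TrialUser("u04", sessions=4, hit_error=True),
    TrialUser("u05", sessions=1, hit_error=False),
    TrialUser("u06", sessions=3, hit_error=False),
    TrialUser("u07", sessions=2, hit_error=False),
    TrialUser("u08", sessions=5, hit_error=False),
    TrialUser("u09", sessions=2, hit_error=False),
    TrialUser("u10", sessions=6, hit_error=False),
]

result = churn_by_error_exposure(users)
```

In this made-up sample, churn is much higher among users who hit an error than among those who did not, which would point at reliability rather than the offer. Without per-user error records, only the blended figure is available and the two explanations cannot be told apart.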

The Rebuild Cost That Never Gets Accounted For

The financial case for prototype-first usually ignores the back half of the math. Building a prototype costs X. Building a production system costs Y. So prototype-first costs X + Y, whereas building production-first costs just Y, right?

Not quite. In practice, “migrating the prototype to production” often means a full rebuild. That is a rule of thumb from many projects, not a law of physics. The database schema designed for speed will not survive a proper data model review. The authentication hack will not pass a security assessment. The monolithic deployment that runs fine on a single server will not scale under real load without significant rearchitecting. The team that built the prototype fast and cheap made decisions that made sense for a prototype and do not make sense for a production system. Those decisions are embedded throughout the codebase. Pulling them out is harder than starting over.

The actual math is: prototype cost + rebuild cost (which is close to Y anyway) + the cost of the time you spent running the prototype in production before you admitted it needed to be rebuilt. That is significantly more than just building it right the first time.
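The same arithmetic as a short sketch. All figures are hypothetical and in arbitrary units, chosen only to show the structure of the comparison; the function name and the specific values are assumptions made for this example.

```python
def prototype_first_total(prototype_cost, rebuild_cost, delay_cost):
    """Total cost of prototype-first: prototype + rebuild + time lost before the rebuild."""
    return prototype_cost + rebuild_cost + delay_cost


# Hypothetical figures, for illustration only.
production_cost = 100  # Y: building production quality from the start
prototype_cost = 20    # X: the throwaway prototype
rebuild_cost = 90      # assumed close to Y, following the argument above
delay_cost = 15        # assumed cost of running the prototype before rebuilding

total = prototype_first_total(prototype_cost, rebuild_cost, delay_cost)
extra = total - production_cost
print(f"prototype-first: {total}, production-first: {production_cost}, difference: {extra}")
```

With these assumed inputs, prototype-first comes out 25 units more expensive. The exact gap depends entirely on the numbers you plug in; the point is that the rebuild and the delay belong in the comparison at all.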

Why We Don’t Build Prototypes

Our business model is structured around equity as much as cash fees. When we take equity in a company, the product we build is the product that company goes to market with. There is no throwaway phase because we are not paid to do two rounds of work. We are paid to validate the idea, and we need the validation data to be real.

This aligns incentives in the right direction. We want to build production quality because we want to know whether the product actually works in market. Not whether it works in a demo environment. Not whether early adopters can tolerate a rough experience. Whether the product, deployed on real infrastructure and acquired through real commercial channels, creates enough value that users will pay for it and come back.

That answer is only available if the product is reliable enough to deliver it. So that is what we build.

Who This Matters For

If you are at the stage of needing external validation (you are about to raise a seed round, bring in a co-founder, or commit significant personal capital), the quality of your validation data is not an engineering question. It is an existential one. A go decision based on prototype data is often a go decision based on ambiguous reads. The risk is not just technical debt. The risk is betting on the wrong information.

Production quality from day one is how you make sure the data you collect means something. It is why we do it, and it is why every other approach leaves you with a harder call to make when it counts.

Next step: discovery call

On the call we cover:

- the hypothesis you want to test and whether production quality is worth it;

- what our 12-week flow would look like for your case;

- fit for your stage, or whether a lighter entry point makes more sense.

Written by

Aurum Avis Labs

Builds and ships at Aurum Avis Labs. Writes here about what we learn working with founders and SMEs in the DACH region.
